I recently read Spec-Driven Development by Haim Michale, and it resonated with some of my day-to-day thoughts about SDD.

The problem SDD aims to solve isn’t new

Missing, incomplete, and ambiguous requirements that evolve over time have always plagued software delivery, but the problem is now amplified: the pace is faster, LLMs have a strong tendency to fill gaps by guessing, and they carry zero tribal knowledge (no kitchen-table conversations, no “oh, we tried that in 2022” instincts). EARS (Easy Approach to Requirements Syntax) is an interesting framework for making requirements more machine-readable, but it still requires a human to steer it. The spec is only as good as the intent behind it.
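To make the "machine-readable" point concrete, here is a minimal sketch that sorts requirement sentences into the five basic EARS patterns by their opening keyword. The function name `classify_requirement` and the regexes are my own illustration, not part of any EARS tooling, and the sketch deliberately ignores combined patterns (for example "While ..., when ...").

```python
import re
from typing import Optional

# Basic EARS patterns, matched by the keyword that opens the sentence.
# These regexes are an illustrative assumption, not an official grammar.
EARS_PATTERNS = [
    ("event-driven", re.compile(r"^when\b.+,\s*the\s+.+\bshall\b.+", re.IGNORECASE)),
    ("state-driven", re.compile(r"^while\b.+,\s*the\s+.+\bshall\b.+", re.IGNORECASE)),
    ("unwanted-behavior", re.compile(r"^if\b.+,\s*then\s+the\s+.+\bshall\b.+", re.IGNORECASE)),
    ("optional-feature", re.compile(r"^where\b.+,\s*the\s+.+\bshall\b.+", re.IGNORECASE)),
    # Ubiquitous requirements have no leading keyword: "The <system> shall <response>".
    ("ubiquitous", re.compile(r"^the\s+.+\bshall\b.+", re.IGNORECASE)),
]


def classify_requirement(text: str) -> Optional[str]:
    """Return the name of the EARS pattern the sentence follows, or None."""
    sentence = text.strip()
    for name, pattern in EARS_PATTERNS:
        if pattern.match(sentence):
            return name
    return None


if __name__ == "__main__":
    samples = [
        "When the user submits the form, the system shall validate all fields.",
        "The API shall respond within 200 ms.",
        "Make it fast",
    ]
    for sample in samples:
        print(classify_requirement(sample), "<-", sample)
```

A check like this can tell you that a requirement is well-formed. It cannot tell you whether the requirement is the right one, which is exactly where the human steering comes in.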

Legacy code and brownfield projects are the elephant in the room

Most SDD content assumes you’re starting fresh. But the vast majority of engineering work happens in systems with years of accumulated decisions, undocumented assumptions, and implicit context baked into the codebase. Making that accessible to coding agents, let alone to SDD, is a real and largely unsolved problem. As far as I’m aware, there are no clear best practices or guidelines here yet. This is an area we need to tackle head-on.

SDD also accelerates a role blur we’re already talking about

If specs are first-class artifacts that live in the repo, who writes them? Do PMs start pushing requirements documents directly into codebases, expanding into territory traditionally owned by engineers? Do developers take on deeper responsibility for refining and maintaining specifications? Both are plausible. Neither is cost-free. The boundary is getting blurry fast.

And drift…

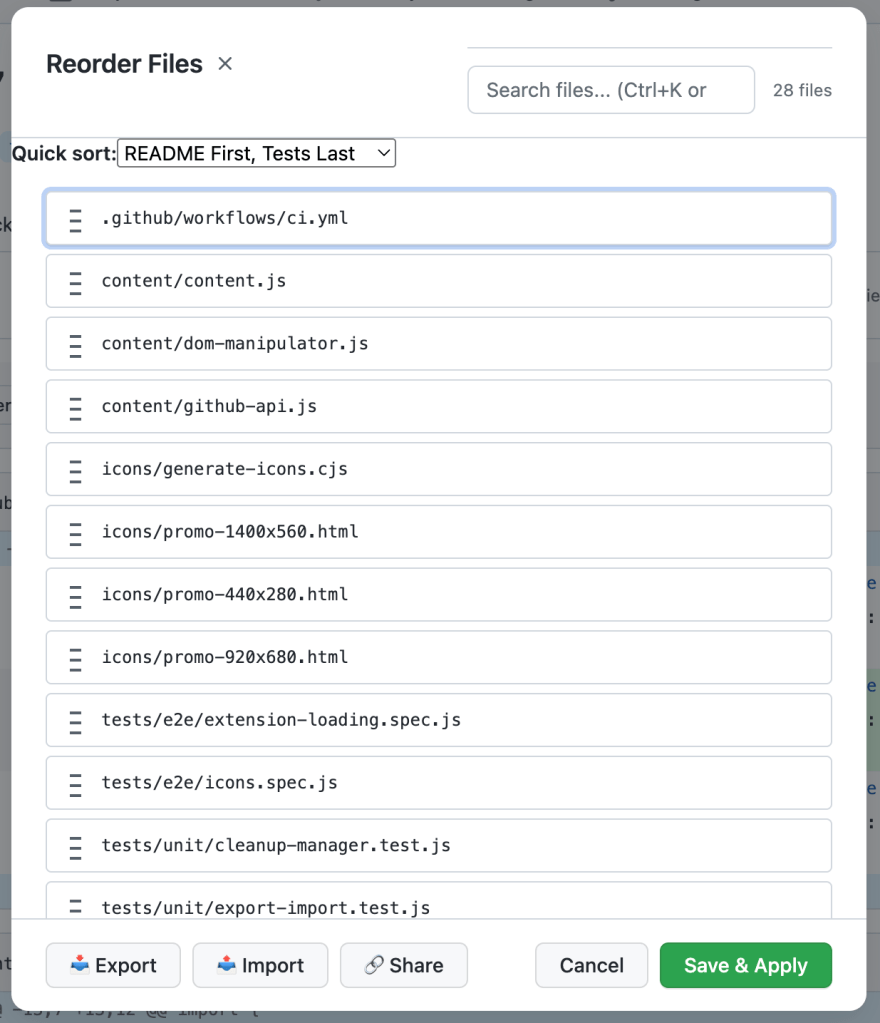

Drift is what happens when specs and code silently diverge: a requirement changes, but the spec file doesn’t, or a PR lands and the corresponding document isn’t updated. Over time, specs stop describing the system, and that’s arguably worse than having no specs at all, because they create false confidence. My suggestion: drift detection should be treated as a core part of repo gardening. Flag when code changes without a corresponding spec update, and vice versa. Make it visible, not something that accumulates quietly in the background. Expect “spec debt” to enter the vocabulary soon.
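As a rough illustration of that flagging idea, the sketch below looks at the list of files touched by a change and warns when only one side moved. The `src/` and `specs/` prefixes and the `check_drift` function are hypothetical; a real repo would need its own mapping between code and spec files.

```python
from typing import Iterable, Optional

# Assumed repo layout, purely for illustration.
CODE_PREFIXES = ("src/",)
SPEC_PREFIXES = ("specs/",)


def check_drift(changed_files: Iterable[str]) -> Optional[str]:
    """Return a warning if code and specs did not change together, else None."""
    files = list(changed_files)  # allow any iterable, including generators
    code_changed = any(path.startswith(CODE_PREFIXES) for path in files)
    spec_changed = any(path.startswith(SPEC_PREFIXES) for path in files)

    if code_changed and not spec_changed:
        return "code changed without a corresponding spec update"
    if spec_changed and not code_changed:
        return "spec changed without a corresponding code update"
    return None


if __name__ == "__main__":
    # Example: a change that touches code only.
    print(check_drift(["src/billing/invoice.py", "README.md"]))
```

The file list could come from something like `git diff --name-only` against the base branch. Since pure refactors legitimately touch code without changing behavior, a check like this is probably better surfaced as a visible warning than as a hard gate.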

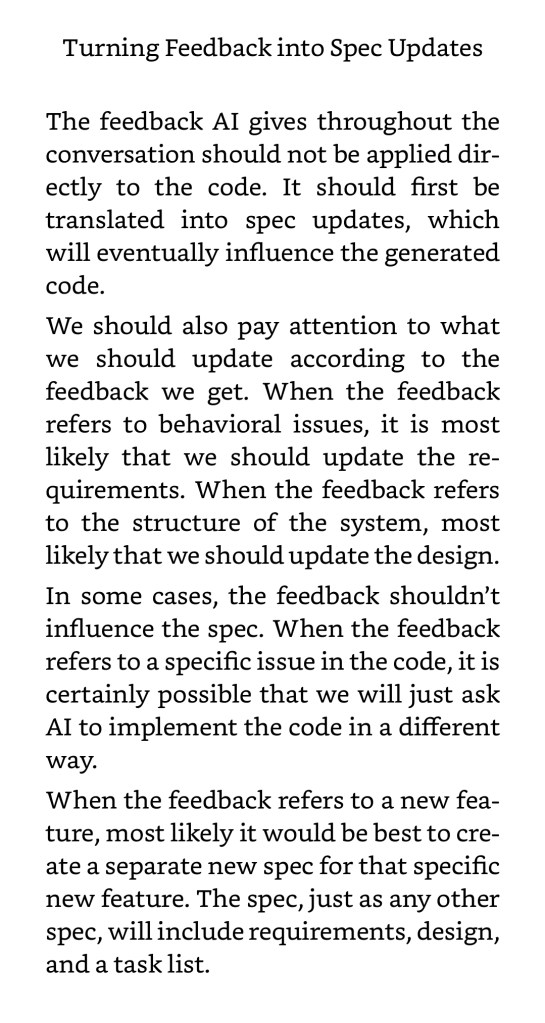

While different SDD methodologies vary in the exact documents they produce, I found the attached screenshot a useful cheat sheet for knowing when to update which document.