I finished listening to “The Great CEO Within” by Matt Mochary and Alex MacCaw. A few thoughts:

1️⃣ A tactical cheat sheet

I view the book as a tactical cheat sheet: short, practical chapters you can skim for ideas. It’s great for quick exposure, and most chapters include references for deeper dives. For me, this book has excellent value for time.

2️⃣ Revisit in the LLM era

Two well-known ideas in the book are Getting Things Done and Inbox Zero.

Inbox-zero and productivity advice hit differently today. With LLMs helping triage emails, summarize threads, and highlight what actually matters, the principles remain the same – but the execution is far more automated.

3️⃣ Optimizing meetings

TL;DR: come prepared, use written communication in advance, and don’t deviate from the planned agenda.

The authors suggest holding all meetings, including 1:1s, on the same day. From my experience, for 1:1s that go beyond status updates and require real attention (e.g., feedback), stacking too many of them on the same day can be overwhelming for most people.

4️⃣ “The first thing to optimize is yourself”

One of my favorite quotes from the book. It emphasizes founders’ and leaders’ health, mental and physical, something that has historically been overlooked. A good reminder that sustainable leadership starts with managing your own energy.

The book also mentions principles of conscious leadership: listening to feedback and acting on it, and not being afraid to make (and admit) mistakes.

This week, I also read a blog post titled “Reflection is a Crucial Leadership Skill”, which made these ideas more actionable and down-to-earth.

What should I read next?


Learn in Public – week 02

I started this week with deeplearning.ai’s course on semantic caching, created in collaboration with Redis. That sent me down a rabbit hole, exploring different LLM caching strategies and the products that support them.
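To make the idea concrete, here is a minimal sketch of a semantic cache. It assumes a toy bag-of-words "embedding" and an arbitrary similarity threshold; a real setup would use a proper embedding model and a vector store. The names `embed`, `SemanticCache`, and the threshold value are hypothetical and not taken from the course.

```python
import math
from collections import Counter
from typing import Optional


def embed(text: str) -> Counter:
    # Toy stand-in for an embedding model: a bag-of-words count vector.
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[token] * b[token] for token in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class SemanticCache:
    def __init__(self, threshold: float = 0.8):
        self.threshold = threshold  # assumed value; tune per use case
        self.entries = []           # list of (embedding, cached answer)

    def get(self, prompt: str) -> Optional[str]:
        # Return the cached answer of the most similar prompt, if similar enough.
        query = embed(prompt)
        best_score, best_answer = 0.0, None
        for vector, answer in self.entries:
            score = cosine(query, vector)
            if score > best_score:
                best_score, best_answer = score, answer
        return best_answer if best_score >= self.threshold else None

    def put(self, prompt: str, answer: str) -> None:
        self.entries.append((embed(prompt), answer))
```

Unlike an exact-match cache, a lookup here succeeds for prompts that are worded differently but land close enough in embedding space, which is also where the risk lies: a threshold that is too low returns answers to the wrong question.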

One such product is AWS Bedrock Prompt Caching. If large parts of your prompts are static (specifically, the prefixes), reprocessing the prefix on every request is a waste of time and money. Prompt or context caching lets you process the prefix once and store it, reducing costs and improving performance.

Sounds great, right? Let’s check the pricing model. If your requests are more than 5 minutes apart, your cache will be cleared. If your requests are short, caching won’t be activated; if the cache hit rate is low, you will pay an extra, non-usage-based premium for cache writes. I highly recommend reading the “How Much Does Bedrock Prompt Caching Cost?” section in the article “Amazon Bedrock Prompt Caching”.
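A rough way to reason about the hit-rate trade-off is a small cost model. The sketch below assumes a cache miss pays a write premium on the prefix and a cache hit pays a discounted read price; the default multipliers are illustrative placeholders, not actual Bedrock prices, and the function names are hypothetical.

```python
def relative_cost(prefix_tokens, suffix_tokens, hit_rate,
                  write_multiplier=1.25, read_multiplier=0.1):
    """Expected input cost per request with caching, relative to no caching.

    A value below 1.0 means caching saves money. The multipliers are
    assumptions for illustration; check the current price list.
    """
    baseline = prefix_tokens + suffix_tokens
    # Hits read the cached prefix cheaply; misses pay the write premium.
    prefix_factor = hit_rate * read_multiplier + (1 - hit_rate) * write_multiplier
    return (prefix_tokens * prefix_factor + suffix_tokens) / baseline


def break_even_hit_rate(write_multiplier=1.25, read_multiplier=0.1):
    # Solve h * read + (1 - h) * write = 1 for the hit rate h.
    return (write_multiplier - 1) / (write_multiplier - read_multiplier)
```

Under these assumed multipliers, the break-even hit rate is a little over 20%; below it, the write premium outweighs the savings from cache reads.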

AI Just Took a Big Step Into Digital Health

🚀 AI is moving deeper into digital health – over the past week, both OpenAI and Anthropic have introduced major features aimed at bringing powerful AI capabilities into healthcare and life sciences.

🔹 OpenAI: ChatGPT Health

OpenAI has launched ChatGPT Health, a dedicated health experience that lets users securely connect their medical records and wellness app data (e.g., Apple Health, Function, and MyFitnessPal) to get more informed insights about their health and wellness. The feature is designed to help people better interpret test results, prepare for doctor visits, and navigate everyday health questions — not replace clinicians. Enhanced privacy protections ensure that health chats and data remain isolated and encrypted, and that users retain full control over connections and data.

🔹 Anthropic: Claude for Healthcare & Life Sciences

Following an earlier announcement regarding Claude for Life Sciences, Anthropic introduced Claude for Healthcare alongside expanded life science capabilities, bringing its Claude AI into regulated medical and scientific use cases. This includes HIPAA-ready infrastructure and connectors to industry data sources (like CMS coverage rules, ICD-10 codes, and NPI registries) to support tasks such as prior authorizations, claims management, and clinical documentation. Claude can also summarize medical histories and explain test results in plain language. On the life sciences side, new integrations with clinical trial, preprint, and bioinformatics platforms aim to accelerate research workflows and regulatory documentation.

Both announcements show the AI industry racing into digital health with different focus areas. OpenAI’s move toward personalized health guidance for individuals complements Anthropic’s broader, enterprise-oriented tools for providers and researchers. Together, they raise exciting possibilities and important questions about regulatory standards, data privacy, and the role of AI in care delivery.

Bonus – GrantFlow – a grant management platform that automates discovery, planning, and application workflows for researchers and institutions.

4 AWS re:Invent announcements to check

AWS re:Invent 2025 took place this week, and as always, dozens of announcements were unveiled. At the macro level, announcing Amazon EC2 Trn3 UltraServers for faster, lower-cost generative AI training can make a significant difference in the market, which is primarily biased towards Nvidia GPUs. At the micro-level, I chose four announcements that I find compelling and relevant for my day-to-day.

AWS Transform custom – AWS Transform enables organizations to automate the modernization of codebases at enterprise scale, including legacy frameworks, outdated runtimes, infrastructure-as-code, and even company-specific code patterns. The custom agent applies transformation rules defined in documentation, natural language descriptions, or code samples consistently across the organization’s repositories.

Technical debt tends to accumulate quietly, damaging developer productivity and satisfaction. Transform custom aims to “crush tech debt” and free up developers to focus on innovation instead. For organizations managing many microservices, legacy modules, or long-standing systems, this could dramatically reduce maintenance burden and risk, and increase employee satisfaction and retention over time.

Partially complementary, AWS introduced 2 frontier agents in addition to the already existing Kiro agent –

- DevOps agent – an on-call / incident-response agent that integrates across monitoring, code repos, and service tickets to detect root causes and coordinate responses.

https://aws.amazon.com/blogs/aws/aws-devops-agent-helps-you-accelerate-incident-response-and-improve-system-reliability-preview/

- Security agent – an agent that proactively secures applications throughout the entire development lifecycle by conducting automated design and code reviews, and performing on-demand penetration testing.

https://aws.amazon.com/blogs/aws/new-aws-security-agent-secures-applications-proactively-from-design-to-deployment-preview/

AWS Lambda Durable Functions – Durable Functions enable building long-running, stateful, multi-step applications and workflows – directly within the serverless paradigm. Durable functions support a checkpoint-and-replay model: your code can pause (e.g., wait for external events or timeouts) and resume within 1 year without incurring idle compute costs during the pause.

Many real-world use cases, such as approval flows, background jobs, human-in-the-loop automation, and cross-service orchestration, require durable state, retries, and waiting. Previously, these often required dedicated infrastructure or complex orchestration logic. Durable Functions enable teams to build more robust and scalable workflows and reduce overhead.
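The checkpoint-and-replay idea can be illustrated with a generic sketch. This is not the Lambda Durable Functions SDK; `DurableContext`, `Suspended`, and the approval workflow below are hypothetical names used only to show the pattern: each completed step is recorded, and when the workflow resumes, the code re-runs from the top but replays recorded results instead of re-executing the steps.

```python
class DurableContext:
    """Records each step's result so a later run replays it instead of re-executing."""

    def __init__(self, checkpoints=None):
        self.checkpoints = checkpoints if checkpoints is not None else {}

    def step(self, name, fn):
        if name in self.checkpoints:   # replay: reuse the stored result
            return self.checkpoints[name]
        result = fn()                  # first execution: run, then checkpoint
        self.checkpoints[name] = result
        return result


class Suspended(Exception):
    """Raised to stop the run while waiting for an external event."""


executed = []  # side-effect log, to show which steps really ran


def create_order():
    executed.append("create_order")
    return "order-42"


def ship(order_id):
    executed.append("ship")
    return f"shipped {order_id}"


def approval_workflow(ctx, approvals):
    order_id = ctx.step("create_order", create_order)
    if order_id not in approvals:
        raise Suspended(order_id)      # nothing runs while we wait
    return ctx.step("ship", lambda: ship(order_id))


def run(ctx, approvals):
    # Returns the workflow result, or None if it suspended.
    try:
        return approval_workflow(ctx, approvals)
    except Suspended:
        return None
```

Calling `run` once without an approval suspends after the first step; calling it again with the saved checkpoints and the approval finishes the workflow without creating the order a second time.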

AWS S3 Vectors (General Availability) – Amazon S3 Vectors was announced about 6 months ago and is now generally available. This adds native vector storage and querying capabilities to S3 buckets. That is, you can store embedding/vector data at scale, build vector indexes, and run similarity search via S3, without needing a separate vector database. The vectors can be enriched with metadata and integrated with other AWS services for retrieval-augmented generation (RAG) workflows. I think of it as “Athena” for embeddings.

This makes it much easier and more cost-effective for teams to integrate AI/ML features, even if they don’t want to manage a dedicated vector DB, and it lowers the barrier to building AI-ready data backends.
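For readers new to vector search, the operation being offered is conceptually simple. The sketch below is a brute-force, in-memory illustration of similarity search with a metadata filter; it does not use the S3 Vectors API, and the record layout (`key`, `vector`, `metadata`) is an assumption made for the example.

```python
import math


def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def query(index, vector, top_k=2, metadata_filter=None):
    """Return the keys of the top_k most similar records, optionally filtered by metadata."""
    metadata_filter = metadata_filter or {}
    candidates = [
        record for record in index
        if all(record["metadata"].get(k) == v for k, v in metadata_filter.items())
    ]
    ranked = sorted(
        candidates,
        key=lambda record: cosine_similarity(vector, record["vector"]),
        reverse=True,
    )
    return [record["key"] for record in ranked[:top_k]]


# Tiny hypothetical index with two-dimensional embeddings.
index = [
    {"key": "doc-1", "vector": [1.0, 0.0], "metadata": {"lang": "en"}},
    {"key": "doc-2", "vector": [0.9, 0.1], "metadata": {"lang": "en"}},
    {"key": "doc-3", "vector": [0.0, 1.0], "metadata": {"lang": "he"}},
]
```

A managed service replaces the linear scan with an index that scales to far more vectors, but the inputs and outputs have the same shape: a query vector, an optional filter, and the nearest keys.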

Amazon SageMaker Serverless Customization – Fine-Tuning Models Without Infrastructure – AWS announced a new capability that accelerates model fine-tuning by eliminating the need for infrastructure management. Teams can upload a dataset and select a base model, and SageMaker handles the fine-tuning pipeline, scaling, and optimization automatically – all in a serverless, pay-per-use model. This customized model can also be deployed using Bedrock for Serverless inference. It is a game-changer, as serving a customized model was previously very expensive. This feature makes fine-tuning accessible to far more teams, especially those without dedicated ML engineers.

These are just a handful of the (many) announcements from re:Invent 2025, and they represent a small, opinionated slice of what AWS showcased. Collectively, they highlight a clear trend: Amazon is pushing hard into AI-driven infrastructure and developer automation – while challenging multiple categories of startups in the process.

While Trn3 UltraServers aim to chip away at NVIDIA’s dominance in AI training, the more immediate impact may come from the developer- and workflow-focused releases. Tools like Transform Custom, the new frontier agents, and Durable Functions promise to reduce engineering pain – if they can handle the real, messy complexity of enterprise systems. S3 Vectors and SageMaker Serverless Customization make it far easier to adopt vector search and fine-tuning without adding a new operational burden.

5 interesting things (02/11/2025)

Measuring Engineering Productivity – Measuring engineering productivity is a topic that has been discussed as long as the field of engineering has existed. This post acknowledges the tension of measuring engineering work (where metrics can be easily manipulated). It proposes a pragmatic system of minimal burden, high visibility, and context-sensitive metrics, rather than focusing on lines of code.

https://justoffbyone.com/posts/measuring-engineering-productivity/

Stop Avoiding Politics – Politics usually has a bad name, but the article argues that avoiding the “politics” of an organization doesn’t remove politics; it just removes your ability to influence outcomes and lets others decide for you. This is a helpful reminder that part of seniority is engaging in stakeholder dynamics, not just writing code.

https://terriblesoftware.org/2025/10/01/stop-avoiding-politics/

Team Dynamics after AI – This post critiques the rush to utilize AI to scale engineering artifact production and argues that what matters most remains the “illegible” human and team elements: context, feedback loops, diversity of roles, and the social glue that holds work together. I link it to the “Measuring engineering productivity” post in the sense that simply measuring throughput or artifacts might miss the hidden “team health” or context dimension.

https://mechanicalsurvival.com/blog/team-dynamics-after-ai/

Useful Engineering Management Artifacts – This is a practical collection of templates for various purposes, including team charters, career development plans, and decision briefs. It complements the productivity and team dynamics posts by providing actual artifacts you can use to operationalize some of the ideas.

https://bjorg.bjornroche.com/management/engineering-management-artifacts/

Stop Caring So Much About Your People – I find this post a bit weird. In the post-“Radical Candor” era, it feels obvious that giving feedback is both essential and meaningful. I agree with the author’s point that leaders sometimes over-prioritize team happiness at the expense of organizational health, and I’d extend that further: over-protecting people from discomfort also hurts their own growth. As leaders, giving feedback is often uncomfortable, but it’s one of the most valuable things we can do – to help our people, our teams, our company, and even ourselves evolve.

https://avivbenyosef.com/stop-caring-so-much-about-your-people/

From Demo Hell to Scale: Two Takes on Building Things That Last

I recently came across two blog posts that made me think, especially in light of a sobering statistic I’ve seen floating around: a recent MIT study reports that 95% of enterprise generative AI pilots fail to deliver real business impact or move beyond demo mode.

One post is a conversation with Werner Vogels, Amazon’s long-time CTO, who shares lessons from decades of building and operating systems at internet scale. The other, from Docker, outlines nine rules for making AI proof-of-concepts that don’t die in demo land.

Despite their different starting points, I was surprised by how much the posts resonated with one another. Here’s a short review of where they align and where they differ.

Where They Agree

- Solve real problems, not hype – Both warn against chasing the “cool demo.” Docker calls it “Solve Pain, Not Impress”, while Vogels is blunt: “Don’t build for hype.” This advice sounds obvious, but it’s easy to fall into the trap of chasing novelty. Whether you’re pitching to executives or building at AWS scale, both warn that if you’re not anchored in a real customer pain, the project is already off track.

- Build with the end in mind – Neither believes in disposable prototypes. Docker advises designing for production from day zero: add observability, guardrails, and testing, and think about scale early. Vogels echoes this with “What you build, you run”, highlighting that engineers must take ownership of operations, security, and long-term maintainability. Both perspectives converge on the same principle: if you don’t build like it’s going to live in production, it probably never will.

- Discipline over speed – Both posts emphasize discipline over blind speed. Docker urges teams to embed cost and risk awareness into PoCs, even tracking unit economics from day one. Vogels stresses that “cost isn’t boring—it’s survival” and frames decision-making around reversibility: move fast when you can reverse course, slow down when you can’t. Different wording, same idea: thoughtful choices early save pain later.

Where They Differ

- Scope: the lab vs. the long haul – Docker’s post is tightly focused on building PoCs in the messy realities of AI prototyping: how to avoid “demo theater” and make something that survives first contact with production. Vogels’ advice is broader, aimed at general engineering, technology leadership, infrastructure, decision-making at scale, and organization-level priorities. Vogels speaks from decades of running Amazon-scale systems, where the horizon is years, not weeks.

- Tactics vs. culture – Docker’s advice is concrete and technical: use remocal workflows, benchmark early, add prompt testing to CI/CD. Vogels is less about specific tools and more about culture: engineers owning what they build, organizations learning to move fast on reversible decisions, and leaders setting clarity as a cultural value. Docker tells you what to do. Vogels tells you how to think.

- Organizational Context and Scale – Docker speaks to teams fighting to get from zero to one—making PoCs credible beyond the demo stage. Vogels speaks from AWS’s point of view, where the challenge is running infrastructure that millions rely on. Docker’s post is about survival; Vogels is about resilience at scale.

What strikes me about these two perspectives is how perfectly they complement each other. Docker’s advice isn’t really about AI – it’s about escaping demo hell by building prototypes with production DNA from day one. Vogels tackles what happens when you actually succeed: keeping systems reliable when thousands depend on them. They’re describing the same journey from different ends. Set up your prototypes with the right foundations, and you dramatically increase the odds that your product will one day face the kinds of scale and resilience questions Vogels addresses.

AI, Paradigm Shifts, and the Future of Building Companies

Over the past few months, I have been constantly reading conversations about how Generative AI will reshape software engineering. On LinkedIn, Twitter, or in closed professional groups, engineers and product leaders debate how tools like Cursor, GitHub Copilot, or automated testing frameworks will impact the way software is built and teams are organized.

But the conversation goes beyond just engineering practices. If we zoom out, AI will not only transform the workflows of software teams but also the structure of companies and even the financial models on which they are built. This kind of change feels familiar – it echoes a deeper historical pattern in how science and technology evolve.

Kuhn’s Cycle of Scientific Revolutions

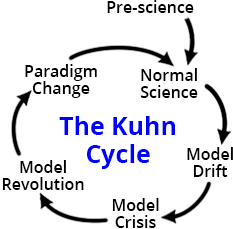

During my bachelor’s, I read Thomas Kuhn’s The Structure of Scientific Revolutions. Kuhn argued that science does not progress in a linear, step-by-step manner. Instead, it moves through cycles of stability and disruption. The Kuhn Cycle, as reframed by later scholars, breaks this process into several stages:

- Pre-science – A field without consensus; multiple competing ideas.

- Normal Science – A dominant paradigm sets the rules of the game, guiding how problems are solved.

- Model Drift – Anomalies accumulate, and cracks in the model appear.

- Model Crisis – The old framework fails; confidence collapses.

- Model Revolution – New models emerge, challenging the old order.

- Paradigm Change – A new model wins acceptance and becomes the new normal.

The Kuhn Cycle Applied to Software Development

Normal Science

For decades, software engineering has operated under a shared set of practices and beliefs:

- Clean Code & Best Practices – DRY, SOLID, Unit Testing, Peer Reviews.

- Agile & Scrum – Iterative sprints and ceremonies as the “right” way to build products.

- DevOps & CI/CD – Automation of builds, deployments, and testing.

- Organizational Structure – Specialized roles (frontend, backend, QA, DevOps, PM) and a belief that more engineers equals more output.

The underlying assumption is: hire more engineers + refine practices → better and quicker software.

Model Drift

Over time, cracks began to show.

- The talent gap – demand for software far outstrips available developers.

- Velocity mismatch – Agile rituals can’t keep pace with market demands.

- Complexity overload – Microservices and massive codebases create systems that are too complex for a single person to comprehend fully.

- Knowledge silos – onboarding takes months, and institutional knowledge remains fragile.

These anomalies signaled that “hire more engineers and improve processes” was no longer a sustainable model.

Model Crisis

The strain became obvious:

- Even tech giants with thousands of engineers struggle with code sprawl and coordination overhead.

- Brooks’ Law bites – adding more people to a project often makes it slower.

- Business pressure grows – leaders demand faster iteration, lower costs, and higher adaptability than human-only teams can deliver.

- Early AI tools, such as GitHub Copilot and ChatGPT, reveal something provocative: machines can generate boilerplate, tests, and documentation in seconds, tasks once thought to be unavoidably human.

This is where many organizations sit today – patching the old paradigm with AI, but without a coherent new model.

Model Revolution

A new way of working begins to take shape. Here are some experiments that are already visible around us –

- AI-first engineering – using AI agents for scaffolding code, generating tests, or refactoring large systems. Humans act as curators, reviewers, and high-level designers.

- Smaller, AI-augmented teams

- New roles and workflows – QA shifts toward system-level validation; PMs focus less on ticket grooming and more on problem framing and prompting.

- Org structures evolve – less siloing by specialization, more “AI-augmented full-stack builders.”

- Economics shift – productivity is no longer headcount-driven but iteration-driven. Cost models change when iteration is nearly free.

Paradigm Change

In the coming years, some of the ideas above, and probably additional ones, could stabilize as the “normal science” of software development and organizational building. But we are not there yet. Once we get there, today’s experiments will feel as obvious as Agile sprints or pull requests do now.

We are in the midst of model drift tipping into crisis, with glimpses of revolution already underway. Kuhn’s lesson is that revolutions are not just about better tools – they’re about shifts in worldview. For AI, the shift might be that companies will no longer be limited by headcount and manual processes but by their ability to ask the right questions, frame the correct problems, and adapt their models of value creation.

We are moving toward a future where the shape of companies, not just their software stacks, will look radically different, and that’s an exciting era to be a part of.

LLM Debt: The Double-Edged Sword of AI Integration

Have you noticed how half the posts on LinkedIn these days feel like they were written by an LLM – too many words for too little substance? Or how product roadmaps suddenly include “AI features” that nobody asked for, just because it sounds good in a pitch deck? Or those meetings where someone suggests “let’s use GPT for this,” when a simple SQL query, an if-statement, or a much simpler ML model would do the job?

Laurence Tratt recently coined the term LLM inflation to describe how humans use LLMs to expand simple ideas into verbose prose, only for others to shrink them back down. That concept got me thinking about a related phenomenon: LLM debt.

LLM debt is the growing cost of misusing LLMs — by adding them where they don’t belong and neglecting them where they could help.

We’re all familiar with technical debt, product debt, and design debt – the shortcuts or missed opportunities that slow us down over time. Similarly, organizations are quietly accumulating LLM debt.

So, what does LLM debt look like in practice? It’s a double-edged liability:

- Overuse: Integrating LLMs where they’re unnecessary adds latency, complexity, cost, and stochasticity to systems that could be simpler, faster, and more reliable without them. For example, sending every API request through a multibillion-parameter model when a simple regex or deterministic logic would suffice.

- Underuse: Failing to adopt LLM-based tools where they could genuinely help results in wasted effort and missed opportunities. Think of teams manually triaging support tickets, writing repetitive documentation, or analyzing text data by hand when an LLM could automate much of the work.
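One way to keep both sides of this debt in check is a "deterministic first, LLM as fallback" pattern. The sketch below is only an illustration: the `ORD-` ID format and the `llm_fallback` callable are hypothetical, and the fallback is passed in so that no particular LLM client is assumed.

```python
import re

ORDER_ID = re.compile(r"\bORD-\d{6}\b")  # hypothetical order ID format


def extract_order_id(message, llm_fallback=None):
    """Return (order_id, source), trying the cheap deterministic path first."""
    match = ORDER_ID.search(message)
    if match:
        return match.group(0), "regex"   # fast, free, and reproducible
    if llm_fallback is not None:
        # Only messages the regex cannot handle reach the model.
        return llm_fallback(message), "llm"
    return None, "none"
```

Tracking how often each `source` is used gives a simple signal in both directions: a fallback that is almost never needed may be overuse, while a large share of `"none"` results may point to underuse.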

Like product or technical debt, a small amount of LLM debt can be strategic: it allows experimentation, faster prototyping, or proof-of-concept development. However, left unmanaged, it compounds, creating systems that are over-engineered in some areas and under-leveraged in others, which slows product evolution and innovation. Like other types of debt, it should be owned and managed.

LLMs are powerful, but they come with costs. Just as we track and manage technical debt, we need to recognize, measure, and pay down our LLM debt. That means asking tough questions before adding LLMs to the stack, and also being bold enough to leverage them where they could provide real value.

If LLM inflation showed us how words can expand and collapse in unhelpful cycles, LLM debt shows us how our systems can quietly accumulate inefficiencies that slow us down. Recognizing it early is the key to keeping our products lean, intelligent, and future-ready.

Things I recently built with my daughter

My daughter just finished first grade and is about to turn seven. One day, she noticed me playing a math puzzle on a news site and asked if she could join. I told her that when an easy one came along, I’d be happy to solve it with her.

A few days passed. She asked again.

I didn’t want to say no, so I offered to sketch a similar game on paper. But designing a good puzzle turned out to be more challenging than expected. I scribbled a lightweight version instead—and that sparked an idea. I asked if she’d like to build a game together.

1. Math Grid

This was our first game. It’s a visual puzzle: players are given a grid with some numeric constraints on rows and columns, and the goal is to fill it in correctly. Inspired by logic puzzles like Sudoku and Kakuro, the constraints are simplified for early elementary-level arithmetic, so it stays approachable while still encouraging reasoning.

2. Number Detector

Later, while solving exercises from her school workbook, we came across number riddles. This inspired our second app. In Number Detector, the player is given partial clues (like a number’s sum of digits or a multiple constraint) and has to figure out the correct number from a limited set.

We added randomness and regeneration to keep things fresh, making the practice repeatable and varied.

3. Triangle Tactics

A month later, while playing a pen-and-paper strategy game, she casually said: “Let’s make it into an app—like we did in the old days.” 🙂

Her request blew my mind.

We built Triangle Tactics, a simple turn-based game where players connect dots to form triangles, and the player who forms the most triangles wins. What surprised me most was the technical challenge – not in the game logic, but in rendering the dots and lines correctly. It was solved only after switching to a different LLM than the default.

Reflections

These experiments turned into more than just games. They became opportunities to:

- Understand the importance of English: We worked in English—despite it not being our native language—and talked about why fluency matters, especially when working with tools, prompts, or documentation.

- Explore computers as collaborators: “What do you mean it misunderstood the prompt?”, “How should we ask to get the result we want?”

- Practice QA thinking: “Why isn’t this button working?”, “Is it behaving the way we expected?”

- Enjoy quality time: A fun excuse to step outside our usual routines and build something together.

AWS has entered the building

AWS has released several notable announcements within the LLM ecosystem over the last few days.

Introducing Amazon S3 Vectors (preview) – Amazon S3 Vectors is a durable, cost-efficient vector storage solution that natively supports large-scale AI-ready data with subsecond query performance, reducing storage and query costs by up to 90%.

Why I find it interesting –

- Balancing cost and performance – storing vectors in a dedicated database is more expensive but yields better query performance. If you know which vectors are “hot”, you can keep them in the database and store the rest in S3.

- Designated buckets – it started with table buckets and has now evolved to vector buckets. Interesting direction.
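The hot/cold split mentioned in the first bullet could look roughly like the sketch below. It is a toy, in-memory illustration under stated assumptions: both tiers are plain dictionaries standing in for a vector database and an S3 vector bucket, and the `good_enough` threshold for skipping the cold tier is an arbitrary value.

```python
import math


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def best_match(store, vector):
    """Return (key, score) of the most similar vector in store, or (None, 0.0)."""
    best = (None, 0.0)
    for key, candidate in store.items():
        score = cosine(vector, candidate)
        if score > best[1]:
            best = (key, score)
    return best


def tiered_query(hot, cold, vector, good_enough=0.9):
    # Query the fast, expensive tier first.
    key, score = best_match(hot, vector)
    if score >= good_enough:
        return key, "hot"
    # Fall back to the cheaper, slower tier only when needed.
    cold_key, cold_score = best_match(cold, vector)
    if cold_score > score:
        return cold_key, "cold"
    return key, "hot"
```

The design choice is the usual caching trade-off: the better the guess about which vectors are hot, the fewer queries pay the latency of the cold tier.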

Launch of Kiro – the IDE market is on fire, with OpenAI’s acquisition of Windsurf falling apart, Claude Code and Cursor competing, and now Amazon revealing Kiro with the promise: “helps you do your best work by bringing structure to AI coding with spec-driven development”.

Why I find it interesting –

- At first, I wondered why AWS entered this field, but I assume it is a must-have these days, and might lead to higher adoption of their models or Amazon Q.

- The different IDEs and CLI tools are influenced by each other so it will be interesting to see how a new player influences this space.

Strands Agents is now at v1.0.0 – Strands Agents is an AWS open-source SDK that enables building and running AI agents across multiple environments and models, with many pre-built tools that are easy to use.

Why I find it interesting –

- The Bedrock Agents interface was limiting for a production-grade agent, specifically in terms of deployment modes, model support, and observability. Strands Agents opens many more doors.

- There are many agent frameworks out there (probably two more were released while you read this post). Many of them run into issues when working with AWS Bedrock. If you are using AWS as your primary cloud provider, Strands Agents should be a leading candidate.