I analyzed 1835 hospital price lists so you didn’t have to – this post had a few interesting points. First, I learned about CMS’s price transparency law. In Israel this is a non-issue since the healthcare system works differently: most procedures are covered by the HMOs, so there is no such concern. I would be interested in further analysis of the missing and non-missing prices, i.e., for which CPT codes do most hospitals publish prices, for which do most hospitals not, and can we cluster them (e.g. cardio codes? women’s health? procedures usually done on older people?). This dataset has great potential, and I agree with most of the points in the “Dead On Arrival: What the CMS law got wrong” section.

https://www.dolthub.com/blog/2022-07-01-hospitals-compliance/
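To make the missing-price question concrete, here is a minimal sketch of the first step I have in mind: computing, per CPT code, the share of hospitals that publish a price. The rows, hospital IDs, and prices below are made up for illustration and the column layout is my assumption, not the schema of the actual dataset.

```python
from collections import defaultdict

# Hypothetical toy rows: (hospital_id, cpt_code, price), where price is None
# when the hospital lists the code without publishing a price.
rows = [
    ("h1", "99213", 120.0),
    ("h2", "99213", 95.0),
    ("h3", "99213", None),
    ("h1", "59400", None),
    ("h2", "59400", None),
    ("h3", "59400", 4100.0),
    ("h1", "93000", 40.0),
    ("h2", "93000", 55.0),
    ("h3", "93000", 38.0),
]


def price_coverage(rows):
    """Return {cpt_code: fraction of listing hospitals that published a price}."""
    listed = defaultdict(set)  # hospitals that mention the code at all
    priced = defaultdict(set)  # hospitals that also give a price for it
    for hospital, code, price in rows:
        listed[code].add(hospital)
        if price is not None:
            priced[code].add(hospital)
    return {code: len(priced[code]) / len(hospitals)
            for code, hospitals in listed.items()}


coverage = price_coverage(rows)
# Least-covered codes first: these are the candidates for the "mostly missing" cluster.
for code, share in sorted(coverage.items(), key=lambda kv: kv[1]):
    print(f"{code}: {share:.0%} of hospitals list a price")
```

Note that the denominator here is "hospitals that list the code at all", which is one possible choice; dividing by all hospitals in the dataset would answer a slightly different question. Grouping the resulting shares by code family would be the next step toward the clustering idea.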

How to design better APIs – there are several things I liked in this post. First, it is written very clearly and gives both positive and negative examples. Second, it is language agnostic. The last tip, “Allow expanding resources”, was mind-blowing to me: so simple to think of, and yet I never thought of adding such an argument. Now all I am missing is a cookie-cutter template that implements all that good advice.

https://r.bluethl.net/how-to-design-better-apis
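To illustrate the “Allow expanding resources” idea, here is a minimal, framework-free sketch of how I understand it: the caller passes an `expand` argument, and the response inlines the related resource instead of returning only its ID. The data, the `get_order` function, and the `EXPANDABLE` mapping are all hypothetical names of mine, not code from the post.

```python
# Hypothetical in-memory "database"; every name here is illustrative.
USERS = {7: {"id": 7, "name": "Dana"}}
ORDERS = {1: {"id": 1, "total": 30, "user_id": 7}}

# Maps an expandable field name to (foreign-key field, lookup table).
EXPANDABLE = {"user": ("user_id", USERS)}


def get_order(order_id, expand=""):
    """Return an order, inlining related resources named in `expand`.

    `expand` is a comma-separated string, mimicking a query parameter
    such as `?expand=user` (an assumed URL shape).
    """
    order = dict(ORDERS[order_id])  # copy so the stored record is not mutated
    requested = [name.strip() for name in expand.split(",") if name.strip()]
    for name in requested:
        if name not in EXPANDABLE:
            raise ValueError(f"cannot expand {name!r}")
        key, table = EXPANDABLE[name]
        order[name] = table[order[key]]  # inline the full related resource
    return order


print(get_order(1))                 # only the user_id reference
print(get_order(1, expand="user"))  # the full user object is included
```

The default response stays small, and clients that need the related data can ask for it in the same call instead of making a second request.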

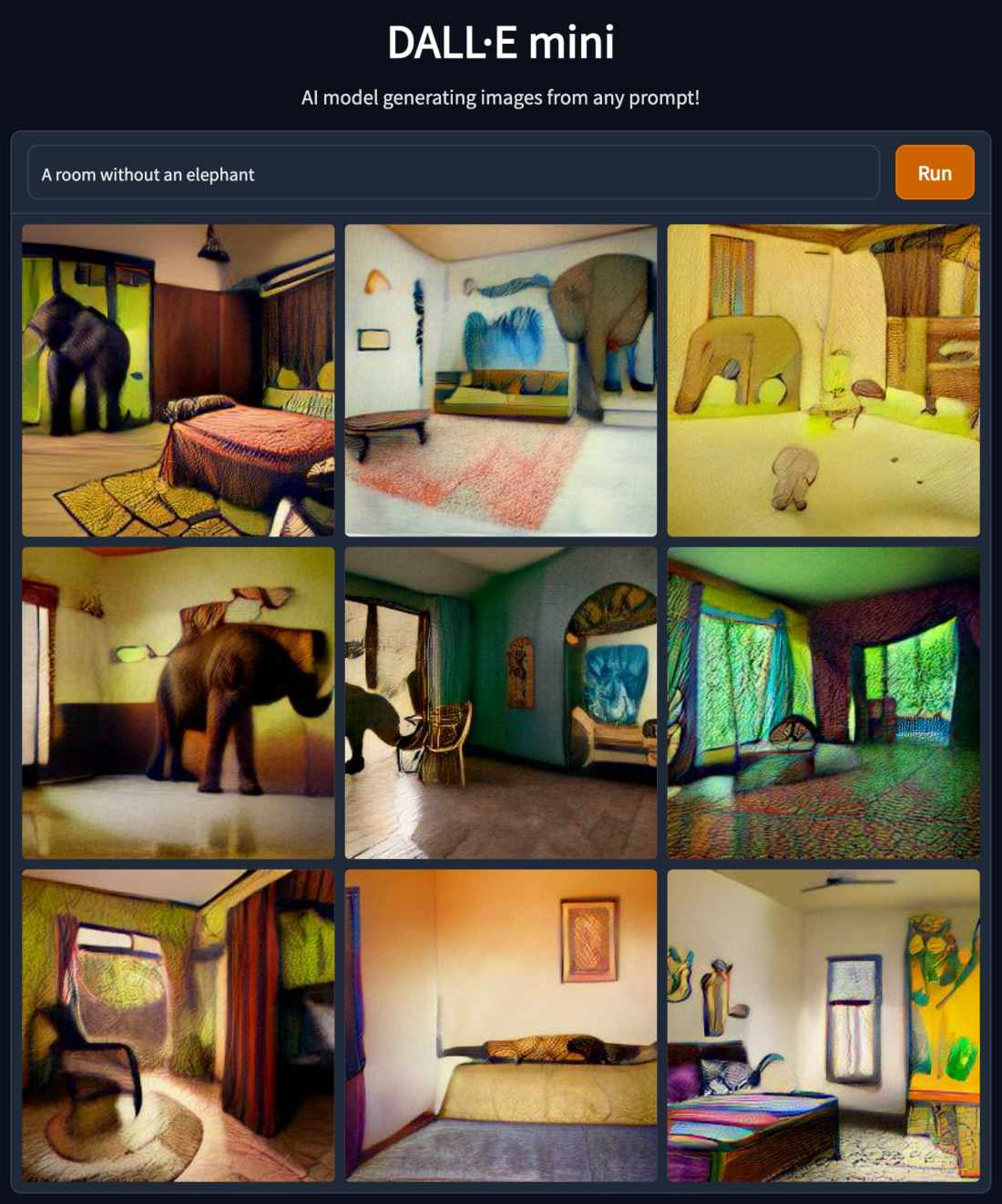

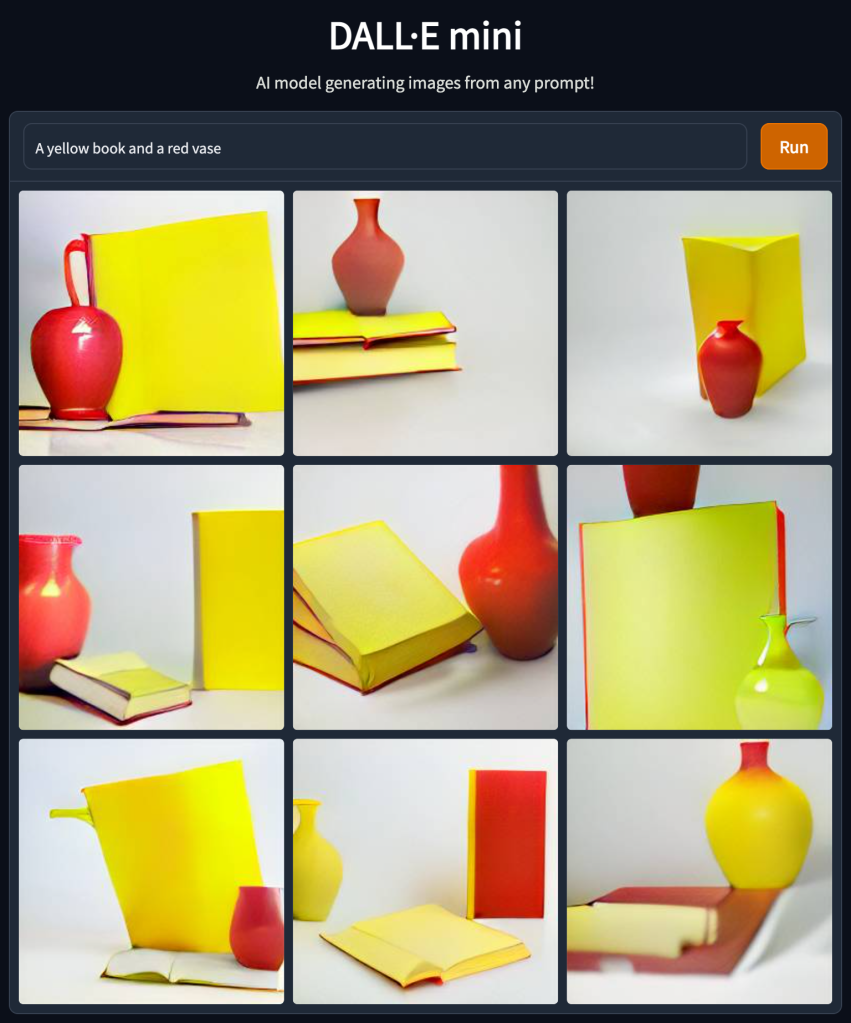

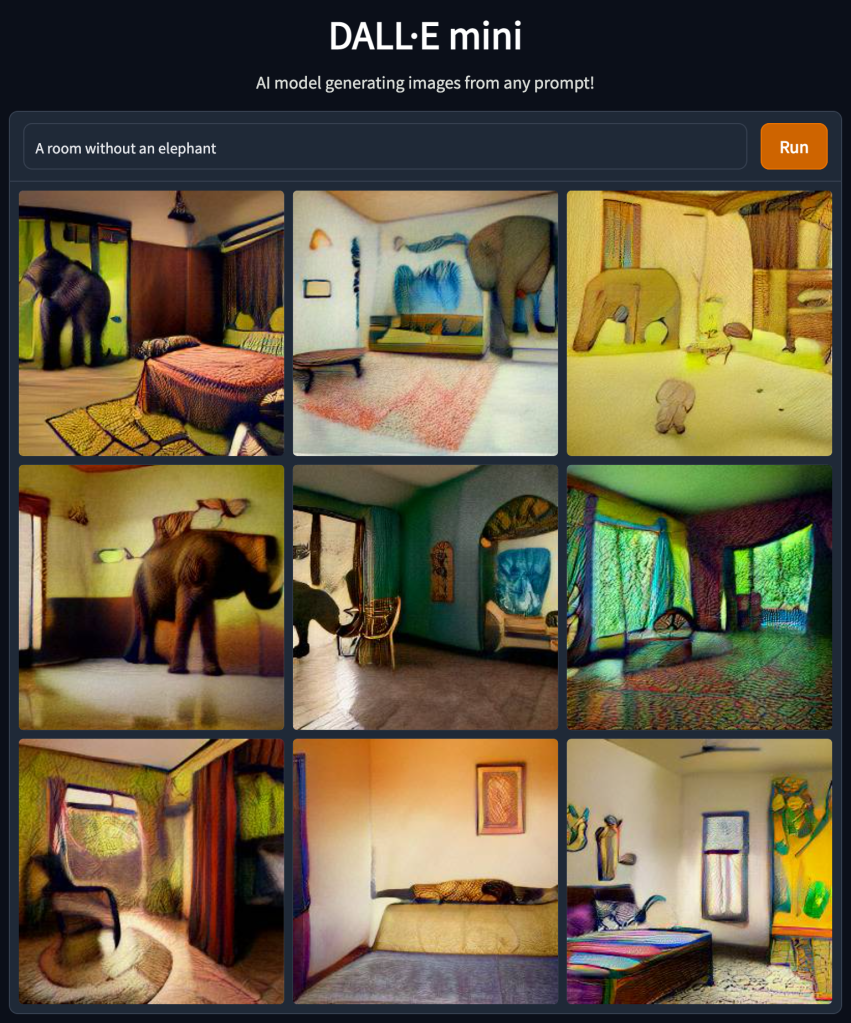

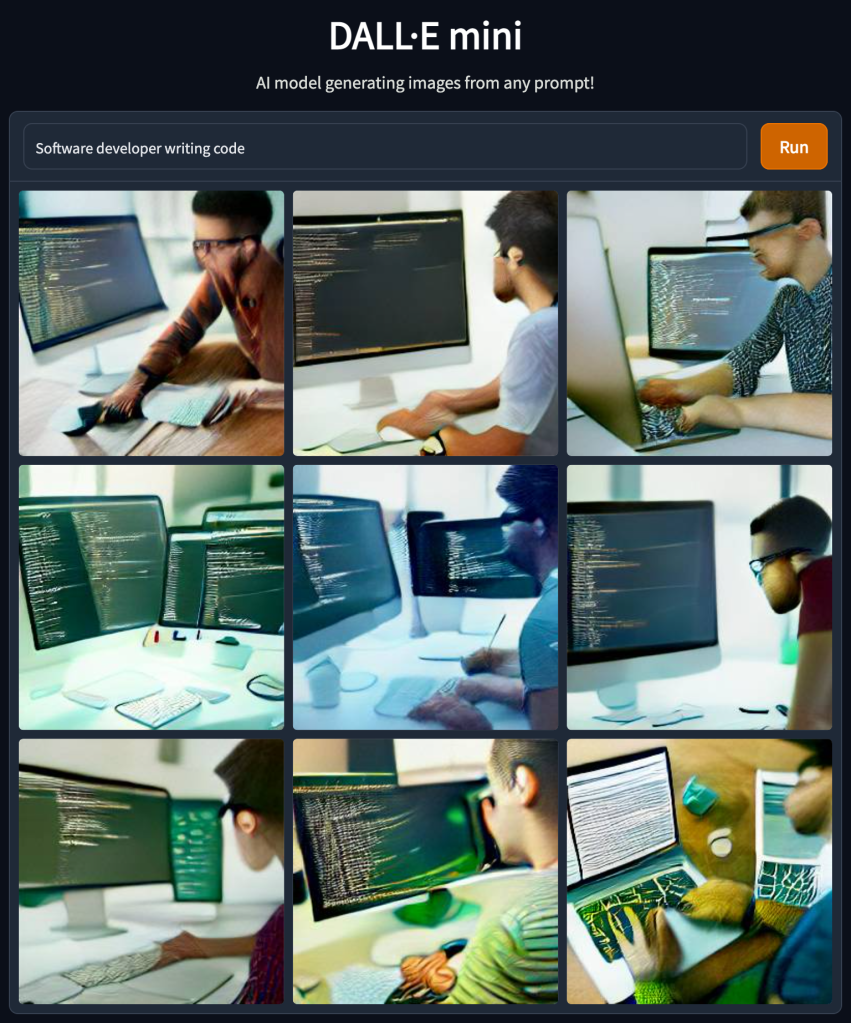

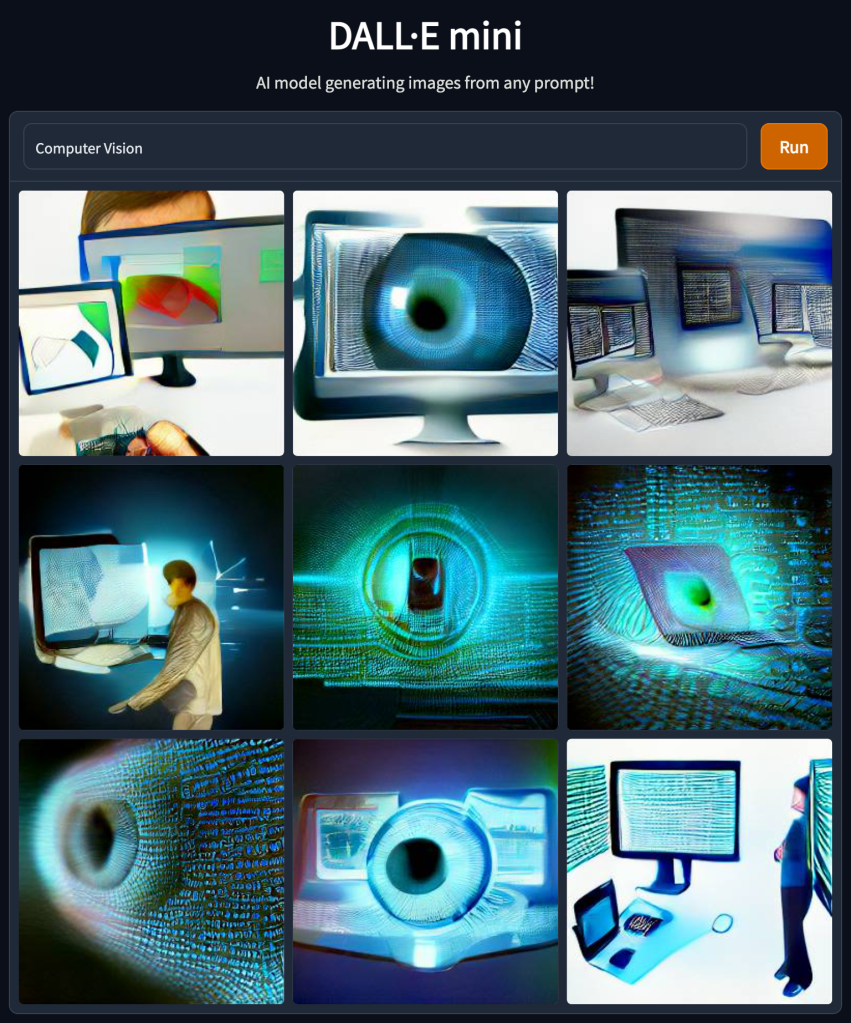

min(DALL-E) – “This is a fast, minimal port of Boris Dayma’s DALL·E Mega. It has been stripped down for inference and converted to PyTorch. The only third-party dependencies are NumPy, requests, pillow, and torch”. Now you can easily generate images using min-dalle on your machine (but it might take a while).

https://github.com/kuprel/min-dalle

Bonus – https://openai.com/blog/dall-e-2-pre-training-mitigations/

4 Things I Learned From Analyzing Menopause Apps Reviews – Dalya Gartzman, She Knows Health CEO, writes about 4 lessons she learned from analyzing reviews of menopause apps. I think it is interesting in 2 ways. First, as a product-market fit strategy: app reviews show what users are saying, asking, or complaining about in related apps.

Inconsistent thoughts on database consistency – this post discusses the many aspects and definitions of consistency and how the term is used in different contexts. I absolutely love those topics. Having said that, I wonder whether people actually hold those discussions in real life, or just use common cloud-managed solutions that encapsulate some of those concerns.