Three recent incidents that should make all of us pause (links at the end of this post):

→ A hallucinated npx command spread through a single LLM-generated skill file to 237 repositories. Real agents executed it. A researcher claimed the package name before an attacker could.

→ 230+ malicious skills uploaded to OpenClaw’s ClawHub in days. The #1-ranked skill was silently exfiltrating data and injecting prompts to bypass safety guidelines. Thousands of downloads before anyone noticed.

→ An audit of 2,890+ OpenClaw skills found 41.7% contain serious security vulnerabilities.

This isn’t a niche problem. If you use Claude Code, Cursor, Copilot, or any modern coding agent, you’re likely installing multiple skills a week. They are recommended by colleagues, appear in blog posts, and are bundled into project templates. The install command is one line. The trust is implicit.

Skills blur the line between configuration and code, but we treat them like documentation. A SKILL.md file can contain natural-language instructions, executable scripts, and package dependencies. There’s no clear boundary where “docs” ends and “code” begins. No lockfile. No integrity checks. No verified publisher identity. The skills CLI has a package-lock.json for its own dependencies – just not for the skills you install.

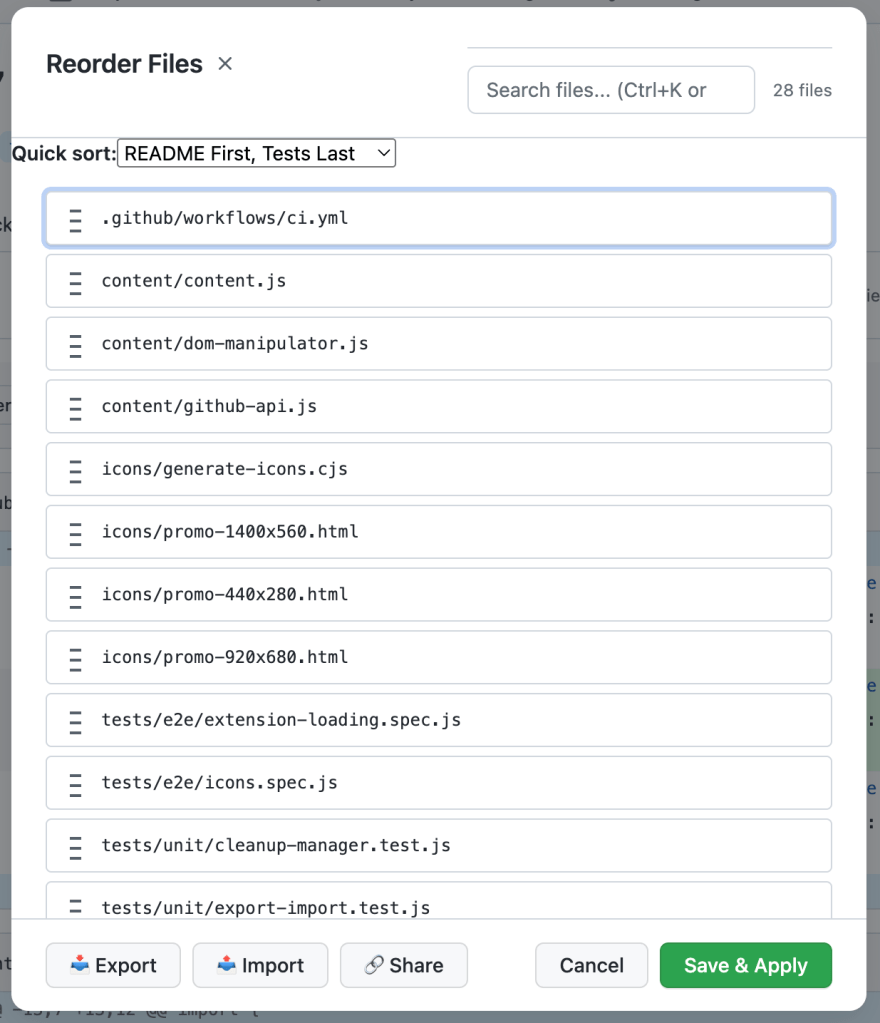

So what should we do today? At a minimum, read the skill files before installing. It takes two minutes and is the equivalent of reviewing a PR. Beyond that, copy your skills folder into your repo and commit it to git, so that your repo becomes your lockfile. Treat any skill update like a dependency upgrade: review the diff before merging, both for security and for testing and quality.
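If you want something closer to a lockfile than plain git history, here is a minimal sketch that records a SHA-256 digest for every file in a skills folder and later reports what changed. The folder layout, the lock file name, and the function names are assumptions made for illustration; no existing skills CLI provides this.

```python
import hashlib
import json
from pathlib import Path


def hash_skills(skills_dir: Path) -> dict[str, str]:
    """Map each file path (relative to skills_dir) to its SHA-256 digest."""
    digests = {}
    for path in sorted(skills_dir.rglob("*")):
        if path.is_file():
            rel = path.relative_to(skills_dir).as_posix()
            digests[rel] = hashlib.sha256(path.read_bytes()).hexdigest()
    return digests


def write_lock(skills_dir: Path, lock_path: Path) -> None:
    """Write the current digests to a JSON file (hypothetical lock format)."""
    lock = json.dumps(hash_skills(skills_dir), indent=2, sort_keys=True)
    lock_path.write_text(lock + "\n")


def changed_files(skills_dir: Path, lock_path: Path) -> list[str]:
    """Return paths added, removed, or modified since the lock was written."""
    recorded = json.loads(lock_path.read_text())
    current = hash_skills(skills_dir)
    return sorted(
        name
        for name in recorded.keys() | current.keys()
        if recorded.get(name) != current.get(name)
    )
```

Keep the lock file outside the skills folder so it does not hash itself, commit it alongside the skills, and treat a non-empty `changed_files(...)` result in CI as a prompt to review the diff by hand.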

The tools are coming. Longer term, this ecosystem needs what npm took 6 years to build: lockfiles, signed packages, verified publishers, and scanners that flag malicious instructions before they reach your agent. Cisco has developed an open-source skill scanner (linked below).
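To make the scanner idea concrete, here is a deliberately naive sketch that flags lines in a skill file worth a human look. It is not how Cisco's Skill Scanner works; the patterns and the `flag_lines` name are assumptions chosen for illustration, and regexes like these will miss anything even lightly obfuscated.

```python
import re

# Illustrative patterns only; a real scanner needs far more than regexes.
SUSPICIOUS = {
    "runs a package via npx": re.compile(r"\bnpx\s+\S+"),
    "pipes a download into a shell": re.compile(
        r"\b(?:curl|wget)\b[^\n|]*\|\s*(?:ba|z)?sh\b"
    ),
    "tells the agent to ignore instructions": re.compile(
        r"ignore\s+(?:all\s+)?(?:previous|prior)\s+instructions", re.IGNORECASE
    ),
}


def flag_lines(skill_text: str) -> list[tuple[int, str]]:
    """Return (line_number, reason) pairs for lines matching any pattern."""
    findings = []
    for number, line in enumerate(skill_text.splitlines(), start=1):
        for reason, pattern in SUSPICIOUS.items():
            if pattern.search(line):
                findings.append((number, reason))
    return findings
```

A hit is a reason to read that line closely, for example to check that a package named in an `npx` command actually exists and is the one you expect. It is not proof of malice, and a clean result is not proof of safety.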

Links:

Snyk Finds Prompt Injection in 36%, 1467 Malicious Payloads in a ToxicSkills Study of Agent Skills Supply Chain Compromise – https://snyk.io/blog/toxicskills-malicious-ai-agent-skills-clawhub/

Update #40: Agent Skill Security Issues – https://maxcorbridge.substack.com/p/update-40-agent-skill-security-issues

Agent Skills Are Spreading Hallucinated npx Commands – https://www.aikido.dev/blog/agent-skills-spreading-hallucinated-npx-commands

Over 41% of Popular OpenClaw Skills Found to Contain Security Vulnerabilities – https://www.esecurityplanet.com/threats/over-41-of-popular-openclaw-skills-found-to-contain-se

Skill Scanner – https://github.com/cisco-ai-defense/skill-scanner